Link: Supervised learning

What is classification

Classification: the model predicts a discrete class label, so each prediction is either correct or incorrect.

Binary classification

Only two available classes

- E.g. dog/cat picture

In supervised learning, we fit/train the model on training data, then test it on testing data by comparing its predictions to the true y values.

Not all correct/incorrect predictions are the same

A simple correct/incorrect count often doesn’t tell the complete story. That’s why we need a confusion matrix.

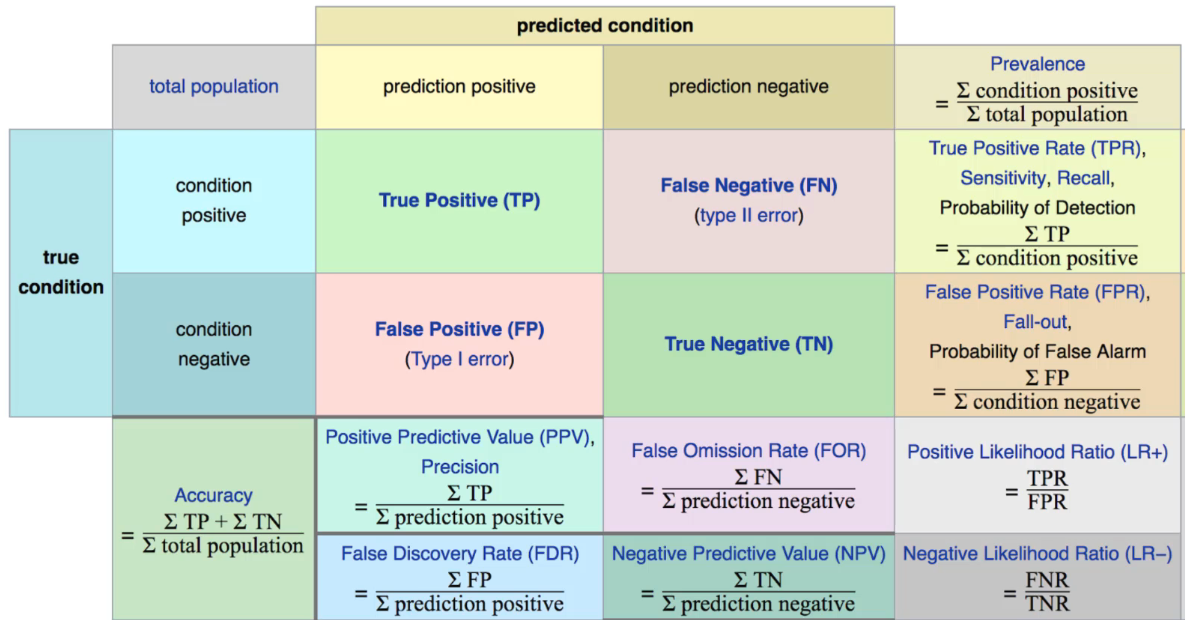

Confusion matrix

The bottom line is that these are all fundamental methods of comparing predicted values vs true values.
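As a rough illustration of comparing predicted values against true values, the four cells of a binary confusion matrix can be counted by hand. This is a minimal sketch in plain Python; the function name `confusion_counts`, the `positive` parameter, and the dog/cat labels are made up for illustration.

```python
from collections import Counter

def confusion_counts(y_true, y_pred, positive=1):
    """Count true/false positives and negatives for a binary problem."""
    counts = Counter()
    for truth, pred in zip(y_true, y_pred):
        if pred == positive:
            # Predicted positive: correct (TP) or a false alarm (FP)
            counts["TP" if truth == positive else "FP"] += 1
        else:
            # Predicted negative: a miss (FN) or correct (TN)
            counts["FN" if truth == positive else "TN"] += 1
    return {key: counts[key] for key in ("TP", "FP", "FN", "TN")}

# Hypothetical labels: 1 = dog, 0 = cat
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 1, 0, 0, 1]
cells = confusion_counts(y_true, y_pred)
print(cells)  # {'TP': 3, 'FP': 1, 'FN': 1, 'TN': 3}
```

Accuracy, recall, and precision can all be derived from these four counts.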

Accuracy

Usage

- Useful when the labels are well balanced.

- E.g. the same number of dog and cat pictures

- Not a good choice when the labels are imbalanced (e.g. most samples are class A and only a small portion are class B). That’s why we need recall and precision.
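To see why accuracy can mislead on imbalanced labels, here is a small sketch with made-up data: 95 samples of class A and 5 of class B, and a "model" that always predicts class A. The `accuracy` helper and the data are assumptions for illustration only.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)

# Hypothetical imbalanced data: 0 = class A (majority), 1 = class B (minority)
y_true = [0] * 95 + [1] * 5
y_pred = [0] * 100  # always predict the majority class

acc = accuracy(y_true, y_pred)
print(acc)  # 0.95
```

The score looks high even though not a single class B sample was found.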

Recall

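The ability to find all the relevant cases: of all the actual positives, the fraction the model correctly identifies, i.e. true positives / (true positives + false negatives).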

Precision

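The ability to identify only the relevant cases: of all the predicted positives, the fraction that is actually positive, i.e. true positives / (true positives + false positives).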
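Recall and precision can be sketched in a few lines of plain Python. The helper name `precision_recall`, the convention of returning 0.0 when a denominator is zero, and the data below are assumptions for illustration.

```python
def precision_recall(y_true, y_pred, positive=1):
    """Return (precision, recall) for the chosen positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    # Assumed convention: report 0.0 when the denominator is zero
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall

# Hypothetical data: 95 negatives followed by 5 positives
y_true = [0] * 95 + [1] * 5
# The model raises 4 false alarms, finds 4 of the 5 positives, and misses 1
y_pred = [0] * 91 + [1] * 4 + [1] * 4 + [0] * 1

precision, recall = precision_recall(y_true, y_pred)
print(precision, recall)  # 0.5 0.8
```

Here the model finds most of the relevant cases (recall 0.8), but only half of what it flags is actually relevant (precision 0.5).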

F1-score

Combines recall and precision into a single metric that balances the two. It’s the harmonic mean of precision and recall: F1 = 2 * precision * recall / (precision + recall).

Why harmonic mean?

Because the harmonic mean punishes extreme differences between the two values, while the simple average (arithmetic mean) doesn’t. It gives a fairer assessment of precision and recall together. E.g. with precision = 1 and recall = 0, the average is 0.5 while F1 = 0.
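The difference between the two means can be checked with a short sketch. The function names are illustrative, and returning 0.0 when both inputs are zero is an assumed convention to avoid dividing by zero.

```python
def arithmetic_mean(precision, recall):
    """Simple average of precision and recall."""
    return (precision + recall) / 2

def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0  # assumed convention when both are zero
    return 2 * precision * recall / (precision + recall)

# Made-up (precision, recall) pairs that all share the same simple average
for p, r in [(1.0, 0.0), (0.9, 0.1), (0.5, 0.5)]:
    print(f"precision={p} recall={r} "
          f"average={arithmetic_mean(p, r):.2f} F1={f1_score(p, r):.2f}")
```

All three pairs have a simple average of 0.5, but F1 only reaches 0.5 when precision and recall are equal, and it drops toward 0 as they diverge.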